My mind is still blown on why people are so interested in spending 2x the cost of the entire machine they are playing on AND a hefty power utility bill to run these awful products from Nvidia. Generational improvements are minor on the performance side, and fucking AWFUL on the product and efficiency side. You’d think people would have learned their lessons a decade ago.

they pay because AMD (or any other for that matter) has no product to compete with a 5080 or 5090

That’s exactly it, they have no competition at the high end

Because they choose not to go full idiot though. They could make their top-line cards to compete if they slam enough into a pipeline and require a dedicated PSU to compete, but that’s not where their product line intends to go. That’s why it’s smart.

For reference: AMD has the most deployed GPUs on the planet as of right now. There’s a reason why it’s in every gaming console except Switch 1/2, and why OpenAI just partnered with them for chips. The goal shouldn’t just be making a product that churns out results at the cost of everything else, but to be cost-effective and efficient. Nvidia fails at that on every level.

this openai partnership really stands out, because the server world is dominated by nvidia, even more than in consumer cards.

Yup. You want a server? Dell just plain doesn’t offer anything but Nvidia cards. You want to build your own? The GPGPU stuff like zluda is brand new and not really supported by anyone. You want to participate in the development community, you buy Nvidia and use CUDA.

Fortunately, even that tide is shifting.

I’ve been talking to Dell about it recently, they’ve just announced new servers (releasing later this year) which can have either Nvidia’s B300 or AMD’s MI355x GPUs. Available in a hilarious 19" 10RU air-cooled form factor (XE9685), or ORv3 3OU water-cooled (XE9685L).

It’s the first time they’ve offered a system using both CPU and GPU from AMD - previously they had some Intel CPU / AMD GPU options, and AMD CPU / Nvidia GPU, but never before AMD / AMD.

With AMD promising release day support for PyTorch and other popular programming libraries, we’re also part-way there on software. I’m not going to pretend like needing CUDA isn’t still a massive hump in the road, but “everyone uses CUDA” <-> “everyone needs CUDA” is one hell of a chicken-and-egg problem which isn’t getting solved overnight.

Realistically facing that kind of uphill battle, AMD is just going to have to compete on price - they’re quoting 40% performance/dollar improvement over Nvidia for these upcoming GPUs, so perhaps they are - and trying to win hearts and minds with rock-solid driver/software support so people who do have the option (ie in-house code, not 3rd-party software) look to write it with not-CUDA.

To note, this is the 3rd generation of the MI3xx series (MI300, MI325, now MI350/355). I think it might be the first one to make the market splash that AMD has been hoping for.

AMD’s also apparently unifying their server and consumer gpu departments for RDNA5/UDNA iirc, which I’m really hoping helps with this too

I know Dell has been doing a lot of AMD CPUs recently, and those have definitely been beating Intel, so hopefully this continues. But I’ll believe it when I see it. These things rarely pan out in terms of price/performance and support.

yeah, I helped raise hw requirements for two servers recently, an alternative to nvidia wasn’t even on the table

Actually…not true. Nvidia recently became bigger in the DC because of their terrible inference cards being bought up, but AMD overtook Intel on chips with all major cloud platforms last year, and their Xilinx chips are slowly overtaking the sales of regular CPUs for special-purpose processing. By the end of this year, I bet AMD will be the most deployed brand in datacenters globally. FPGA is the only path forward in the architecture world at this point for speed and efficiency in single-purpose processing. Nvidia doesn’t have a competing product.

we’re talking GPUs, idk why you’re bringing FPGA and CPUs in the mix

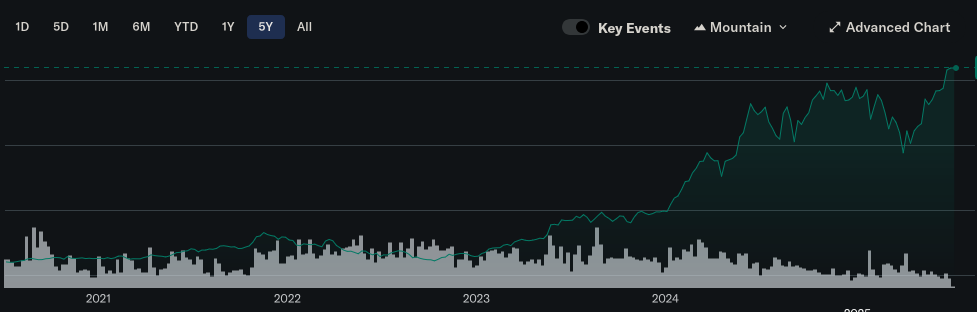

Then why does Nvidia have so much more money?

Because of vendor lock in

See the title of this very post you’re responding to. No, I’m not OP lolz

They have so much money because they’re full of shit? Doesn’t make much sense.

Stock isn’t money in the bank.

No one said anything about stock.

He’s not OP. He’s just another person…

Unfortunately, this partnership with OpenAI means they’ve sided with evil and I won’t spend a cent on their products anymore.

enjoy never using a computer again i guess?

Oh so you support grifting off the public domain? Maybe grow some balls instead of taking the status quo for granted.

I have overclocked my AMD 7900XTX as far as it will go on air alone.

Undervolted every step on the frequency curve, cranked up the power, 100% fan duty cycles.

At its absolute best, it’s competitive or trades blows with the 4090D, and is 6% slower than the RTX 4090 Founder’s Edition (the slowest of the stock 4090 lineup).

The fastest AMD card is equivalent to a 4080 Super, and the next gen hasn’t shown anything new.

AMD needs a 5090-killer. Dual socket or whatever monstrosity which pulls 800W, but it needs to slap that greenbo with at least a 20-50% lead in frame rates across all titles, including raytraced. Then we’ll see some serious price cuts and competition.

And/or Intel. (I can dream, right?) Hell, perform a miracle Moore Threads!

What do you even need those graphics cards for?

Even the best games don’t require those and if they did, I wouldn’t be interested in them, especially if it’s an online game.

Probably only a couple people would be playing said game with me.

Once the 9070 dropped all arguments for Nvidia stopped being worthy of consideration outside of very niche/fringe needs.

Got my 9070XT at retail (well retail + VAT but that’s retail for my country) and my entire PC costs less than a 5090.

Yeah I got a 9070 + 9800x3d for around $1100 all-in. Couldn’t be happier with the performance. Expedition 33 running max settings at 3440x1440 and 80-90fps

But your performance isn’t even close to that of a 5090…….

80-90 fps @ 1440 isn’t great. That’s like last gen mid tier nvidia gpu performance.

Not 1440 like you’re thinking. 3440x1440 is about 34% more pixels to render than standard 2560x1440. It’s a WS. And yes at max settings 80-90fps is pretty damn good. It regularly goes over 100 in less busy environments.
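
For anyone who wants to check the resolution math, here is a quick sketch in Python. The function names are made up for illustration, and it only counts pixels per frame; it says nothing about how a given game actually scales with resolution.

```python
def pixel_count(width: int, height: int) -> int:
    """Total pixels rendered per frame at a given resolution."""
    return width * height


def extra_pixels_pct(wide: tuple[int, int], base: tuple[int, int]) -> float:
    """Percentage of additional pixels in `wide` relative to `base`."""
    return (pixel_count(*wide) / pixel_count(*base) - 1) * 100


ultrawide = (3440, 1440)  # 21:9 ultrawide 1440p
standard = (2560, 1440)   # 16:9 "regular" 1440p

print(pixel_count(*ultrawide))  # 4953600
print(pixel_count(*standard))   # 3686400
print(round(extra_pixels_pct(ultrawide, standard), 1))  # 34.4
```

So the ultrawide panel pushes roughly a third more pixels per frame than 16:9 1440p.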

And yeah it’s not matching a 5090, a graphics card that costs more than 3x mine and sure as hell isn’t giving 3x the performance.

You’re moving the goalposts. My point is for 1/4th the cost you’re getting 60-80% of the performance of overpriced, massive, power hungry Nvidia cards (depending on what model you want to compare to). Bang for buck, AMD smokes Nvidia. It’s not even close.
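
To put the bang-for-buck claim in numbers, here is a rough sketch using only the figures from the comment above (1/4 of the cost, 60-80% of the performance). The function name is hypothetical, and the inputs are the commenter's estimates, not benchmark results.

```python
def relative_value(perf_fraction: float, cost_fraction: float) -> float:
    """Performance per dollar relative to the more expensive card.

    perf_fraction: performance as a fraction of the expensive card (e.g. 0.6)
    cost_fraction: price as a fraction of the expensive card (e.g. 0.25)
    """
    return perf_fraction / cost_fraction


# Figures taken from the comment: 60-80% of the performance at 1/4 the cost.
for perf in (0.60, 0.80):
    value = relative_value(perf, 0.25)
    print(f"{perf:.0%} of the performance at 25% of the cost: {value:.1f}x per dollar")
```

Under those assumptions the cheaper card works out to somewhere between 2.4x and 3.2x the performance per dollar.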

Unless cost isn’t a barrier to you or you have very specific needs they make no sense to buy. If you’ve got disposable income for days then fuck it buy away.

Better bang for your buck, but way less bang and not as impressive of a bang.

Not all of us can afford to spend $3000 for a noticeable but still not massive performance bump over a $700 option. I don’t really understand how this is so difficult to understand lol. You also have to increase the rest of your machine cost for things like your PSU, because the draw on the 5xxx series is cracked out. Motherboard, CPU, all of that has to be cranked up unless you want bottlenecks. Don’t forget your high end 165hz monitor unless you want to waste frames/colors. And are we really going to pretend after 100fps the difference is that big of a deal?

Going Nvidia also means unless you want to be fighting your machine all the time, you need to keep a Windows partition on your computer. Have fun with that.

At the end of the day buy what you want dude, but I’m pulling down what I said above on a machine that cost about $1700. Do with that what you will

I assume people mean 3440x1440 when they say 1440 as it’s way more common than 2560x1440.

Your card is comparable to a 5070, which is basically the same price as yours. There’s no doubt the 5080 and 5090 are disappointing in their performance compared to these mid-high cards, but your card can’t compete with them and nvidia offer a comparable card at the same price point as AMDs best card.

Also the AMD card uses more power than the nvidia equivalent (9700xt vs 5070).

> I assume people mean 3440x1440 when they say 1440 as it’s way more common than 2560x1440.

Most people do not use WS as evidenced by the mixed bag support it gets. 1440 monitors are by default understood to be 2560x1440p as it’s 16:9 which is still considered the “default” by the vast majority of businesses and people alike. You may operate as if most people using 1440+ are on WS but that’s a very atypical assumption.

Raytracing sure but otherwise the 4090 is actually better than the 5070 in many respects. So you’re paying a comparable price for Raytracing and windows dependency, which if that is important to you then go right ahead. Ultimately though my point is that there is no point in buying the insanely overpriced Nvidia offerings when you have excellent AMD offerings for a fraction of the price that don’t have all sorts of little pitfalls/compromises. The Nvidia headaches are worth it for performance, which unless you 3-4x your investment you’re not getting more of. So the 5070 is moot.

I’m not sure what you’re comparing at the end unless you meant a 9070XT which I don’t use/have and wasn’t comparing.

Well, to be fair the 10 series was actually an impressive improvement to what was available. Since then I switched to AMD for better SW support. I know since then the improvements have dwindled.

AMD is at least running the smart game on their hardware releases with generational leaps instead of just jacking up power requirements and clock speeds as Nvidia does. Hell, even Nvidia’s latest lines of Jetson are just recooked versions from years ago.

> AMD is at least running the smart game on their hardware releases with generational leaps instead of just jacking up power requirements and clock speeds as Nvidia does.

AMD could only do that because they were so far behind. GPU manufacturers, at least nvidia, are approaching the limits of what they can do with current fabrication technology other than simply throwing “more” at it. Without a breakthrough in tech all they can really do is jack up power requirements and clock speeds. AMD will be there soon too.

But but but but but my shadows look 3% more realistic now!

The best part is, for me, ray tracing looks great. When I’m standing there and slowly looking around.

When I’m running and gunning and shit’s exploding, I don’t think the human eye is even capable of comprehending the difference between raster and ray tracing at that point.

Yeah, that’s what’s always bothered me about the drive for the highest-fidelity graphics possible. In motion, those details are only visible for a frame or two in most cases.

For instance, some of the PC mods I’ve seen for Cyberpunk 2077 look absolutely gorgeous… in screenshots. But once you get into a car and start driving or get into combat, it looks nearly indistinguishable from what I see playing the vanilla game on my PS5.

It absolutely is, because Ray tracing isn’t just about how precise or good the reflections/shadows look, it’s also about reflecting/getting shadows from things that are outside of your field of view. That’s the biggest difference.

One of the first “holy shit!” moments for me was playing doom I think it was, and walking down a corridor and being able to see that there were enemies around the corner by seeing their reflection on the opposite wall. That’s never been possible before, and it’s only possible thanks to raytracing. Same with being able to see shadows from enemies that are behind you out of screen to the side.

But we can do these reflections with raster already? And you skipped my point. You mention walking down a hall. I said when I’m running and gunning and shit’s exploding. You’re turning and focusing on shooting an enemy or something, there’s no way I’m noticing or paying attention to a reflection of an enemy down a hall.

No we can’t.

I was running and gunning - it was doom 2016! Maybe you don’t pay much attention to your surroundings in games, but others do. Maybe you don’t play competitive online shooters at a high level like others do? That’s the sort of thing that separates the best from the rest.

I’m sorry, I would be really impressed if you shot a rocket at a demon in doom eternal and it exploded and all kinds of shit’s flying around but you noticed the reflection of another around a corner on a shiny wall at the same time. There might be like 4 frames of this captured.

Yes, I play a lot of FPS games. I have an ultra wide monitor and 144Hz.

Not to mention, raster is capable of doing these things, it’s just not “as realistic”. I’ve watched several comparison videos.

Well you’d be impressed then :)

Playing a lot of fps games doesn’t mean you can play at a high level. Having an ultrawide monitor and 144hz is completely irrelevant lol. There are people with 4K 200+hz monitors and 5090s who are trash at competitive play.

Raster isn’t capable of these things, that’s why it’s never been done until raytracing came along. Rasterization rasters what is on the screen, nothing outside of that.

Cause numbers go brrrrrrrrr

If you’re on Windows it’s hard to recommend anything else. Nvidia has DLSS supported in basically every game. For recent games there’s the new transformer DLSS. Add to that ray reconstruction, superior ray tracing, and a steady stream of new features. That’s the state of the art, and if you want it you gotta pay Nvidia. AMD is about 4 years behind Nvidia in terms of features. Intel is not much better. The people who really care about advancements in graphics and derive joy from that are all going to buy Nvidia because there’s no competition.

First, DLSS is supported on Linux.

Second, DLSS is kinda bullshit. The article goes into details that are fairly accurate.

Lastly, AMD is at parity with Nvidia with features. You can see my other comments, but AMD’s goal isn’t selling cards for gamers. Especially ones that require an entire dedicated PSU to power them.

Don’t you mean NVidia’s goal isn’t selling cards for gamers?

No. AMD. See my other comments in this thread. Though they are in every major gaming console, the bulk of AMD sales are aimed at the datacenter.

Nvidia cards don’t require their own dedicated PSU, what on earth are you talking about?

Also DLSS is not “kinda bullshit”. It’s one of the single biggest innovations in the gaming industry in the last 20 years.

Low rent comment.

Second: you apparently are unaware, so just search up the phrase, but as this article very clearly explains…it’s shit. It’s not innovative, interesting, or improving performance, it’s a marketing scam. Games would run better and more efficiently if you just lower the requirements. It’s like saying you want food to taste better, but then they serve you a vegan version of it. AMD’s version is technically more useful, but it’s still a dumb trick.

What exactly am I supposed to be looking at here? Do you think that says that the GPUs need their own PSUs? Do you think people with 50 series GPUs have 2 PSUs in their computers?

> It’s not innovative, interesting, or improving performance, it’s a marketing scam. Games would run better and more efficiently if you just lower the requirements.

DLSS isn’t innovative? It’s not improving performance? What on earth? Rendering a frame at a lower resolution and then using AI to upscale it to look the same or better than rendering it at full resolution isn’t innovative?! Getting an extra 30fps vs native resolution isn’t improving performance?! How isn’t it?

You can’t just “lower the requirements” lol. What you’re suggesting is make the game worse so people with worse hardware can play at max settings lol. That is absolutely absurd.

Let me ask you this - do you think that every new game should still be being made for the PS2? PS3? Why or why not?

Like I said…you don’t know what DLSS is, or how it works. It’s not using “AI”, that’s just marketing bullshit. Apparently it works on some people 😂

You can find tons of info on this (why I told you to search it up), but it uses rendering tables, inference sorting, and pattern recognition to quickly render scenes with other tricks that video formats have used for ages to render images at a higher resolution cheaply from the point of view of the GPU. You render a scene a dozen times once, then it regurgitates those renders from memory again if they are shown before ejected from cache on the card. It doesn’t upsample, it doesn’t intelligently render anything new, and there is no additive anything. It seems you think it’s magic, but it’s just fast sorting memory tricks.

Why you think it makes games better is subjective, but it solely works to run games with the same details at a higher resolution. It doesn’t improve rendered scenes whatsoever. It’s literally the same thing as lowering your resolution and increasing texture compression (same effect on cached rendered scenes), since you bring it up. The effect on the user being a higher FPS at a higher resolution which you could achieve by just lowering your resolution. It absolutely does not make a game playable while otherwise unplayable by adding details and texture definition, as you seem to be claiming.

Go read up.

I 100% know what DLSS is, though by the sounds of it you don’t. It is “AI” as much as any other thing is “AI”. It uses models to “learn” what it needs to reconstruct and how to reconstruct it.

What do you think DLSS is?

> You render a scene a dozen times once, then it regurgitates those renders from memory again if they are shown before ejected from cache on the card. It doesn’t upsample, it doesn’t intelligently render anything new, and there is no additive anything. It seems you think it’s magic, but it’s just fast sorting memory tricks.

This is blatantly and monumentally wrong lol. You think it’s literally rendering a dozen frames and then just picking the best one to show you out of them? Wow. Just wow lol.

> It absolutely does not make a game playable while otherwise unplayable by adding details and texture definition, as you seem to be claiming.

That’s not what I claimed though. Where did I claim that?

What it does is allow you to run a game at higher settings than you could usually at a given framerate, with little to no loss of image quality. Where you could previously only run a game at 20fps at 1080p Ultra settings, you can now run it at 30fps at “1080p” Ultra, whereas to hit 30fps otherwise you might have to drop everything to Low settings.

> Go read up.

Ditto.

It actually doesn’t do what you said anymore, not since an update in like 2020 or something. It’s an AI model now

Folks, ask yourselves, what game is out there that REALLY needs a 5090? If you have the money to piss away, by all means, it’s your money. But let’s face it, games have plateaued and VR isn’t all that great.

Nvidia’s market is not you anymore. It’s the massive corporations and research firms for useless AI projects or number crunching. They have more money than all gamers combined. Maybe time to go outside; me included.

Oh, VR is pretty neat. It sure as shit don’t need no $3000 graphics card though.

Cyberpunk 2077 with the VR mod is the only one I can think of. Because it’s not natively built for VR you have to render the world separately for each eye leading to a halving of the overall frame rate. And with 90 fps as the bare minimum for many people in VR you really don’t have a choice but to use the 5090.

Yeah it’s literally only one game/mod, but that would be my use case if I could afford it.

Also the Train World Sim Series. Those games make my tower complain, and my laptop give up.

Only Cyberpunk 2077 in path tracing. It’s the only game I’m holding off on playing until I can run it on ultra settings. But for that amount of money, I’d better wait until the real 2077 to see it happen.

The studio has done a great job. You most certainly have heard it already, but I am willing to say it again: the game is worth playing with whatever quality you can afford, save stutter-level low fps - the story is so touching it outplays graphics completely (though I do share the desire to play it on ultra settings - will do one day myself)

I plan on getting at least a 4060 and I’m sitting on that for years. I’m on a 2060 right now.

My 2060 alone can run at least 85% of all games in my entire libraries across platforms. But I want at least 95% or 100%

Why not just get a 9060 XT and get that to 99%? (Everything but ray tracing in Black Myth: Wukong.)

Why not - no?

I don’t even care for the monkey game.

Just that one particular game runs poorly on AMD. The new cards are pretty good, even at ray tracing now

Since when did gfx cards need to cost more than a used car?

We are being scammed by nvidia. They are selling stuff that 20 years ago, the equivalent would have been some massive research prototype. And there would be, like, 2 of them in an nvidia bunker somewhere powering deep thought whilst it calculated the meaning of life, the universe, and everything.

3k for a gfx card. Man my whole pc cost 500 quid and it runs all my games and pcvr just fine.

Could it run better? Sure

Does it need to? Not for 3 grand…

Fuck me!..

I haven’t bought a GPU since my beloved Vega 64 for $400 on Black Friday 2018, and the current prices are just horrifying. I’ll probably settle with midrange next build.

When advanced nodes stopped giving you Moore transistors per $

It covers the breadth of problems pretty well, but I feel compelled to point out that there are a few times where things are misrepresented in this post e.g.:

> Newegg selling the ASUS ROG Astral GeForce RTX 5090 for $3,359 (MSRP: $1,999)
>
> eBay Germany offering the same ASUS ROG Astral RTX 5090 for €3,349,95 (MSRP: €2,229)

The MSRP for a 5090 is $2k, but the MSRP for the 5090 Astral – a top-end card being used for overclocking world records – is $2.8k. I couldn’t quickly find the European MSRP but my money’s on it being more than 2.2k euro.

> If you’re a creator, CUDA and NVENC are pretty much indispensable, or editing and exporting videos in Adobe Premiere or DaVinci Resolve will take you a lot longer[3]. Same for live streaming, as using NVENC in OBS offloads video rendering to the GPU for smooth frame rates while streaming high-quality video.

NVENC isn’t much of a moat right now, as both Intel and AMD’s encoders are roughly comparable in quality these days (including in Intel’s iGPUs!). There are cases where NVENC might do something specific better (like 4:2:2 support for prosumer/professional use cases) or have better software support in a specific program, but for common use cases like streaming/recording gameplay the alternatives should be roughly equivalent for most users.

> as recently as May 2025 and I wasn’t surprised to find even RTX 40 series are still very much overpriced

Production apparently stopped on these for several months leading up to the 50-series launch; it seems unreasonable to harshly judge the pricing of a product that hasn’t had new stock for an extended period of time (of course, you can then judge either the decision to stop production or the still-elevated pricing of the 50 series).

> DLSS is, and always was, snake oil

I personally find this take crazy given that DLSS2+ / FSR4+, when quality-biased, average visual quality comparable to native for most users in most situations, and that was with DLSS2 in 2023, not even DLSS3, let alone DLSS4 (which is markedly better on average). I don’t really care how a frame is generated if it looks good enough (and doesn’t come with other notable downsides like latency). This almost feels like complaining about screen space reflections being “fake” reflections. Like yeah, it’s fake, but if the average player experience is consistently better with it than without it then what does it matter?

Increasingly complex manufacturing nodes are becoming increasingly expensive as all fuck. If it’s more cost-efficient to use some of that die area for specialized cores that can do high-quality upscaling instead of natively rendering everything with all the die space then that’s fine by me. I don’t think blaming DLSS (and its equivalents like FSR and XeSS) as “snake oil” is the right takeaway. If the options are (1) spend $X on a card that outputs 60 FPS natively or (2) spend $X on a card that outputs upscaled 80 FPS at quality good enough that I can’t tell it’s not native, then sign me the fuck up for option #2. For people less fussy about static image quality and more invested in smoothness, they can be perfectly happy with 100 FPS but marginally worse image quality. Not everyone is as sweaty about static image quality as some of us in the enthusiast crowd are.

There’s some fair points here about RT (though I find exclusively using path tracing for RT performance testing a little disingenuous given the performance gap), but if RT performance is the main complaint then why is the sub-heading “DLSS is, and always was, snake oil”?

obligatory: disagreeing with some of the author’s points is not the same as saying “Nvidia is great”

I think DLSS (and FSR and so on) are great value propositions but they become a problem when developers use them as a crutch. At the very least your game should not need them at all to run on high end hardware on max settings, with them then being options for people on lower end hardware to either lower settings or combine higher settings with upscaling. When they become mandatory they stop being a value proposition, since the benefit stops being a benefit and starts just being necessary for baseline performance.

They’re never mandatory. What are you talking about? Which games can’t run on a 5090 or even 5070 without DLSS?

Correct me if I am wrong, but maybe they meant when publishers/devs list hardware requirements for their games and include DLSS in the calculations. IIRC AssCreed Shadows and MH Wilds had that.

> I don’t really care how a frame is generated if it looks good enough (and doesn’t come with other notable downsides like latency). This almost feels like complaining about screen space reflections being “fake” reflections. Like yeah, it’s fake, but if the average player experience is consistently better with it than without it then what does it matter?

But it does come with increased latency. It also disrupts the artistic vision of games. With MFG you’re seeing more fake frames than real frames. It’s deceptive and like snake oil in that Nvidia isn’t distinguishing between fake frames and real frames. I forget what the exact comparison is, but when they say “The RTX 5040 has the same performance as the RTX 4090” but that’s with 3 fake frames for every real frame, that’s incredibly deceptive.

He’s talking about DLSS upscaling - not DLSS Frame Generation - which doesn’t add latency.

It does add latency: you need 1-2ms to upscale the frame. However, if you are using a lower render resolution (instead of going up in output resolution while keeping the internal render resolution the same), then the overall latency will be lower because you have a higher frame rate.

Yeah, so it doesn’t add latency. It takes like 1-2ms iirc in the pipeline, which like you said is less than/the same/negligibly more than it would take to render at the native resolution.

Which also means it’s not possible to use it to go to 1000 fps

So it has limits? Oh no…… At 1000fps you can’t do much rendering effects at all. Luckily no one, and I do literally mean no one, plays games at 1000fps.

Yes, but that also means there’s no FPS advantage at all at 500 Hz using DLSS and people do play at 500Hz
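
The back-and-forth above comes down to frame-time arithmetic, which can be sketched roughly as below. This is a simplified model, not how DLSS is actually implemented: it assumes the upscale pass runs serially after the low-resolution render and costs a fixed 1-2 ms (the figure quoted in this thread). The function names and the 20 ms / 12 ms render times are hypothetical.

```python
def fps(frame_time_ms: float) -> float:
    """Frames per second for a given frame time in milliseconds."""
    return 1000.0 / frame_time_ms


def upscaled_frame_time_ms(low_res_render_ms: float, upscale_ms: float) -> float:
    """Frame time when rendering at lower resolution and then upscaling.

    Assumes the two steps run back to back (no overlap), which is a
    simplification.
    """
    return low_res_render_ms + upscale_ms


def fps_ceiling(upscale_ms: float) -> float:
    """Upper bound on frame rate even if the low-res render were free."""
    return fps(upscale_ms)


# Hypothetical GPU-bound case: 20 ms native, 12 ms at the lower internal resolution.
native_ms, low_res_ms, upscale_ms = 20.0, 12.0, 1.5
print(fps(native_ms))                                                  # 50.0
print(round(fps(upscaled_frame_time_ms(low_res_ms, upscale_ms)), 1))   # 74.1

# A fixed upscale cost caps the achievable frame rate.
print(fps_ceiling(1.0), fps_ceiling(2.0))                              # 1000.0 500.0
```

Under these assumptions both sides have a point: in a GPU-bound game the upscale cost is small next to the render time saved, so frame time (and latency) drops, but a fixed 1-2 ms pass also puts a ceiling of roughly 500-1000 fps on what upscaling can ever deliver.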

Thanks for providing insights and inviting a more nuanced discussion. I find it extremely frustrating that in communities like Lemmy it’s risky to write comments like this because people assume you’re “taking sides.”

The entire point of the community should be to have discourse about a topic and go into depth, yet most comments and indeed entire threads are just “Nvidia bad!” with more words.

Obligatory disclaimer that I, too, don’t necessarily side with Nvidia.

Have a 2070s. Been thinking for a while now my next card will be AMD. I hope they get back into the high end cards again :/

The 9070 XT is excellent and FSR 4 actually beats DLSS 4 in some important ways, like disocclusion.

Concur.

I went from a 2080 Super to the RX 9070 XT and it flies. Coupled with a 9950X3D, I still feel a little bit like the GPU might be the bottleneck, but it doesn’t matter. It plays everything I want at way more frames than I need (240 Hz monitor).

E.g., Rocket League went from struggling to keep 240 fps at lowest settings, to 700+ at max settings. Pretty stark improvement.

> I went from a 2080 Super to the RX 9070 XT and it flies.

You went from a 7 year old GPU to a brand new top of the line one, what did you expect? That’s not a fair comparison lol. Got nothing to do with FSR4 vs DLSS4.

> what did you expect?

I expected as much. 👍

The thing to which I was concurring was simply that they said the 9070 was excellent.

> nothing to do with FSR4 vs DLSS4

The 2080 Super supports DLSS. 🤷‍♂️

I’m just posting an anecdote, bro. Chill.

Also the 2080 Super was released in 2019, not 2018. 👍

AMD only releases high end for servers and high end workstations

Bought my first AMD card last year, never looked back

AMD’s Windows drivers are a little rough, but the open source drivers on Linux are spectacular.

I wish I had the money to change to AMD

This is a sentence I never thought I would read.

^(AMD used to be cheap)^

Is it because it’s not how they make money now?

And it only took 15 years to figure it out?

My last Nvidia card was a GTX 980. I bought two of them. After I heard about the 970 scandal. It didn’t directly affect me but fuck Nvidia for pulling that shit. Haven’t bought anything from them. Stopped playing games on PC afterwards, just occasionally on console and laptop iGPU.

AMD & Intel Arc are king now. All that CUDA nonsense is just price-hiking justification.

Nvidia is using the “it’s fake news” strategy now? My how the mighty have fallen.

I’ve said it many times but publicly traded companies are destroying the world. The fact they have to increase revenue every single year is not sustainable and just leads to employees being underpaid, products that are built cheaper and invasive data collection to offset their previous poor decisions.

> Those 4% can make an RTX 5070 Ti perform at the levels of an RTX 4070 Ti Super, completely eradicating the reason you’d get an RTX 5070 Ti in the first place.

You’d buy a 5070 Ti for a 4% increase in performance over the 4070 Ti Super you already had? Ok.

They probably mean the majority of people, not 4070 Ti owners. For them, buying that 4070 Ti would be a better choice already.

After being on AMD for years, I recently went back to Nvidia for one reason: NVENC works way better for encoding livestreams and videos than AMD’s encoder.

https://youtube.com/watch?v=kkf7q4L5xl8

Fixed in 9000 series

You don’t need NVENC, the AMD and Intel versions are very good. If you care about maximum quality you would software encode for the best compression